Shay Perera and Ronen Lavi spent decades in the intelligence units of the Israel Defense Forces. Ronen built and led the IDF’s AI lab for 24 years. Shay spent a decade taking fragmented, chaotic data from multiple sources and turning it into something a human being could act on in real time. Together they received Israel’s National Security Award in 2018.

Then Shay looked at his father-in-law.

Micha had been complaining about back pain and sleep issues for over two years. He saw his family doctor every few months. He was prescribed muscle relaxants and physical therapy. Nothing worked. Eventually, when the symptoms simply wouldn’t resolve, the doctor took a harder look at the chart.

What he found was devastating. An abnormal PSA test result had been sitting there the whole time. Overlooked. Right there, visible to anyone who looked. Nobody had looked. Micha was rushed for tests. He had prostate cancer.

Shay recognized it instantly. Not as a medical failure. As a data problem, the exact kind he had spent years solving in Israeli intelligence. Fragmented systems. Critical signals buried in noise. The things that mattered most getting missed because no human could process it all fast enough.

He and Ronen left the IDF and built Navina. Their mission, stated simply on their website: No More Michas.

Micha’s Story Isn’t Rare. It’s Tuesday.

Ronen, the CEO, tells a story about the early days. Two Israeli entrepreneurs walking into rooms full of American healthcare executives, pitching a solution to American medicine. He’d laugh about it: “Who are these two Israeli entrepreneurs who think they can fix the American healthcare system? They’ve never even been treated in it.”

He said it with self-awareness. And then they fixed it anyway.

Because the problem they identified wasn’t American or Israeli. It was human. A primary care physician today sees 20 patients a day, each with a medical history scattered across disconnected systems that were never designed to talk to each other. Labs here. Imaging there. Specialist notes somewhere else entirely. Every answer exists. Nobody has time to find it.

Doctors spend two hours on documentation for every single hour of direct patient care. More than half are experiencing burnout. And when you ask what’s driving it, the answer isn’t the patients. It’s never the patients.

Micha’s answer was in the chart. It’s almost always in the chart.

What They Built

Navina does what Ronen and Shay spent their careers doing in a different context. It takes fragmented data from multiple sources and turns it into something a physician can act on before they walk into the room.

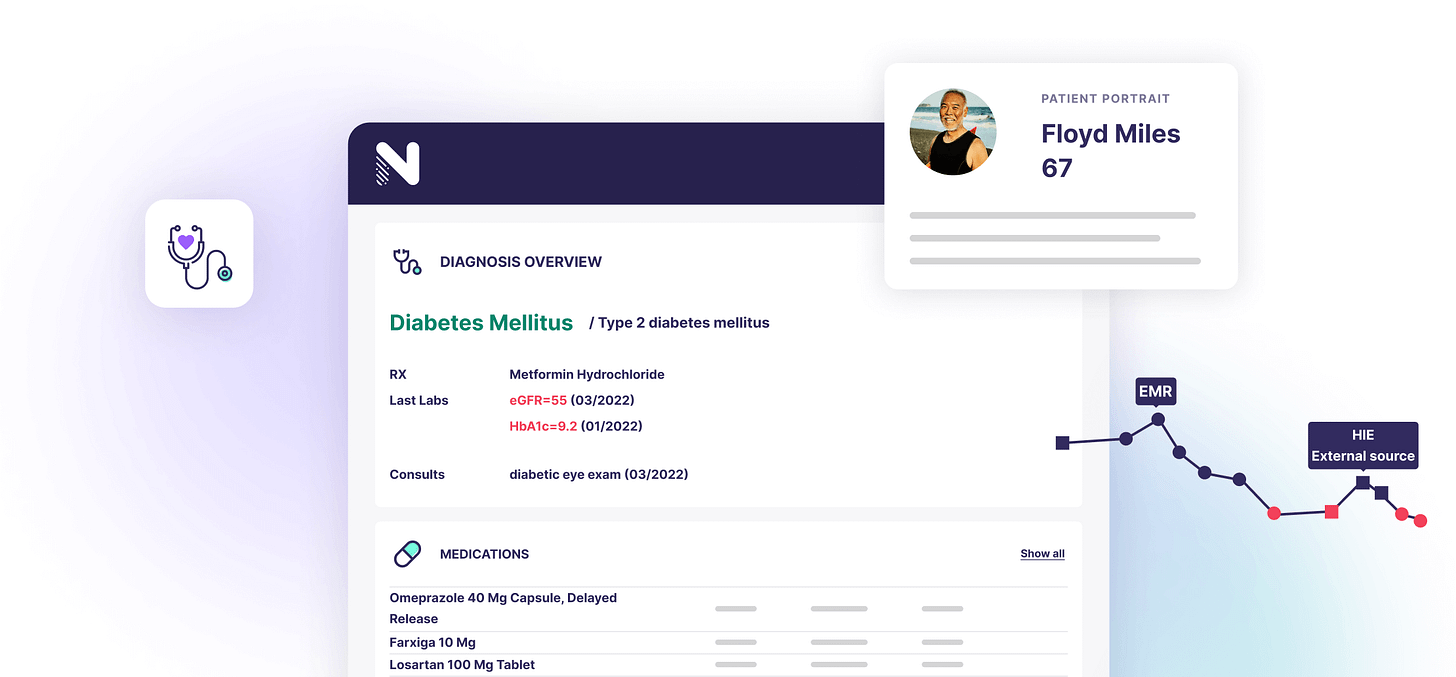

They call it the Patient Portrait. Before the appointment, Navina pulls from major EHR systems, health information exchanges, insurance claims, imaging reports, and consultation notes and puts it all into one coherent clinical picture. Not a data dump. A portrait. The full person, assembled and readable, waiting for the doctor before she touches the door handle.

Then the suspected conditions engine goes further. It reads across all the data (labs, imaging, medications, vitals, free-text notes) and surfaces chronic conditions that were never explicitly documented. Conditions sitting in the data for years, hiding in plain sight, that no physician caught because no physician had the bandwidth to look across all of it at once.
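To make the idea concrete, here is a minimal sketch of what "surfacing an undocumented condition" can mean at its simplest. It is not Navina's implementation, which the article does not describe. The `LabResult` type, the `RULES` table, and the `suspected_conditions` function are hypothetical names, the patient data is invented, and the thresholds are illustrative only, not clinical guidance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabResult:
    test: str
    value: float
    unit: str
    year: int


# Hypothetical rule table: test name -> (threshold, suspected condition).
# Thresholds are illustrative placeholders, not clinical guidance.
RULES = {
    "HbA1c": (6.5, "diabetes"),
    "fasting_glucose": (126.0, "diabetes"),
}


def suspected_conditions(labs, documented):
    """Return (condition, lab) pairs for labs at or above a rule's threshold
    whose condition does not appear in the documented problem list."""
    findings = []
    for lab in labs:
        rule = RULES.get(lab.test)
        if rule is None:
            continue  # no rule for this test, nothing to check
        threshold, condition = rule
        if lab.value >= threshold and condition not in documented:
            findings.append((condition, lab))
    return findings


# Invented example patient: one elevated result, never documented.
labs = [
    LabResult("HbA1c", 7.1, "%", 2021),
    LabResult("fasting_glucose", 98.0, "mg/dL", 2022),
]
findings = suspected_conditions(labs, documented={"hypertension"})
for condition, lab in findings:
    print(f"Suspected {condition}: {lab.test} {lab.value}{lab.unit} ({lab.year})")
```

A real engine would also have to read imaging and free-text notes, which a lookup table like this cannot do. The sketch only shows the core move: compare what the data implies against what the chart says.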

Independent research measured what that actually means in practice.

Phyx Primary Care, a nonprofit innovation lab, studied 120 physicians across 19 practices for a full year. Chart review time for complex patients dropped by 40%. Physician burnout fell by 32%. 84% of physicians said they’d recommend Navina to a colleague.

For the second consecutive year, Navina ranked number one in the KLAS Awards for clinical digital workflow. KLAS is the healthcare industry’s most trusted independent evaluator, and its rankings are based on direct interviews with thousands of practicing clinicians. Not a startup’s marketing deck.

The care gap engine runs alongside all of this, flagging the colonoscopy three years overdue, the vaccination missed, the screening that keeps getting pushed. Everything surfaces inside the workflow the doctor already uses. Nothing new to learn. No separate login. The Portrait is just there when the doctor is ready.
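A care gap check is, at heart, date arithmetic against a schedule. The sketch below shows one way that could look, assuming a hypothetical `INTERVAL_YEARS` table and `care_gaps` function. The intervals and dates are invented for illustration and are not Navina's rules or clinical guidance.

```python
from datetime import date

# Hypothetical screening intervals in years; illustrative values only.
INTERVAL_YEARS = {"colonoscopy": 10, "flu_vaccine": 1}


def whole_years_between(earlier, later):
    """Count completed years from `earlier` to `later`."""
    not_yet_anniversary = (later.month, later.day) < (earlier.month, earlier.day)
    return later.year - earlier.year - int(not_yet_anniversary)


def care_gaps(last_done, today):
    """Map each due screening to the number of whole years it is overdue.
    A screening with no recorded date maps to None."""
    gaps = {}
    for name, interval in INTERVAL_YEARS.items():
        last = last_done.get(name)
        if last is None:
            gaps[name] = None  # never recorded
            continue
        elapsed = whole_years_between(last, today)
        if elapsed >= interval:
            gaps[name] = elapsed - interval
    return gaps


# Invented example patient.
last_done = {
    "colonoscopy": date(2011, 3, 1),
    "flu_vaccine": date(2023, 10, 15),
}
gaps = care_gaps(last_done, today=date(2024, 3, 1))
print(gaps)
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the check repeatable and easy to test.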

What This Looks Like for Doctors

At Primus Health Network in Florida, 93% of providers open Navina every single week. 95% of the AI’s suggestions are reviewed and addressed by clinicians. 77% are added directly into patient records. That isn’t a tool doctors tolerate. That’s a tool doctors trust.

What does it actually change in the room? The doctor walks in already knowing her patient. She knows the suspected conditions. She knows the overdue screenings. She knows the lab result from three years ago that nobody connected to anything yet. She has twelve minutes and she uses all twelve of them on medicine, not on trying to reconstruct a history she should already know.

She doesn’t stay late. She goes home. Her family gets her back.

Now scale that across 10,000 clinicians and 1,300 clinics. For the rural physician with no support staff, Navina delivers the same pre-appointment intelligence as a doctor at a major academic medical center. For the specialist, the referral arrives with the complete clinical picture instead of a two-page summary that’s missing the last three years. For the health plan, patients who need intensive care get properly identified and funded, because the coding that determines how sick a patient is on paper finally reflects how sick the patient actually is.

If You’re Worried About the Risks of AI in Medicine, So Are They

Here’s what physicians actually worry about. Not in a conference panel. In the exam room.

The first worry is accuracy. What if the AI flags something that isn’t there? Every suggestion Navina surfaces is linked back to the original clinical evidence: the specific lab result, the imaging report, the note from three years ago. The doctor sees exactly where it came from. The doctor confirms or dismisses. Always. This isn’t a black box saying “trust me.” It’s a colleague pointing at the chart and saying “look at this.”
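One way to picture that contract in code: a suggestion cannot exist without evidence attached, and it stays pending until a clinician rules on it. This is a hedged sketch under those two assumptions, not Navina's data model. The `Evidence`, `Suggestion`, and `Review` names and the sample record are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Review(Enum):
    PENDING = "pending"
    CONFIRMED = "confirmed"
    DISMISSED = "dismissed"


@dataclass(frozen=True)
class Evidence:
    source: str   # e.g. "lab", "imaging", "note"
    date: str     # ISO date of the original record
    excerpt: str  # the text or value the clinician can verify


@dataclass
class Suggestion:
    condition: str
    evidence: List[Evidence]
    review: Review = Review.PENDING

    def __post_init__(self):
        # Assumption for this sketch: no evidence, no suggestion.
        if not self.evidence:
            raise ValueError("a suggestion must cite at least one piece of evidence")

    def resolve(self, confirmed: bool) -> None:
        """Record the clinician's decision; the tool never sets this itself."""
        self.review = Review.CONFIRMED if confirmed else Review.DISMISSED


# Invented example record.
suggestion = Suggestion(
    condition="diabetes",
    evidence=[Evidence("lab", "2021-05-02", "HbA1c 7.1%")],
)
suggestion.resolve(confirmed=True)
print(suggestion.condition, suggestion.review.value)
```

The design choice worth noticing is that the review state changes only through `resolve`, which takes the clinician's decision as input.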

The second worry is liability. If Navina surfaces a suspected condition, the physician reviews it and moves on, and the patient later presents with exactly that condition, what happens in court?

The honest answer is that the legal landscape around AI in medicine is still unsettled. No framework yet distributes responsibility between physician and tool. Under U.S. malpractice law right now, the physician is still the physician. The decision is still the doctor’s. That isn’t a flaw in Navina’s design. That’s the whole point of the design. Navina was built as a decision support tool, not a diagnostic tool. It shows the doctor what’s there. The doctor decides what to do about it.

The third worry is bias. An AI trained on one patient population may fail another. Navina publishes its monitoring approach: diverse training data, continuous bias assessment, ongoing refinement. It isn’t a solved problem anywhere in the industry. But it’s one Navina treats as architecture, not an afterthought.

Ronen and Shay came from work where a missed signal isn’t a billing dispute. It’s catastrophic. They built the tool with that standard in mind.

The Pattern

There’s a version of Israeli innovation that gets written about constantly. The exits. The unicorns. The Startup Nation narrative. That’s not what this is.

This is two people who watched someone almost die from a preventable failure, recognized the problem from their own careers, and spent years building the fix. Not for Israel. Not for a valuation. For every patient whose answer is sitting in a chart somewhere, waiting for a doctor who finally has time to see it.

Israeli engineers have a habit of looking at broken systems and deciding that’s not acceptable. Not because of national character. Because of the discipline that comes from working in environments where getting it wrong is not an option, where you build for edge cases because the edge cases are the whole point.

Ronen and Shay brought that discipline to American primary care. The doctor they served didn’t know she was the beneficiary of decades of intelligence-grade data engineering. She just walked into a room and, for the first time, already knew her patient.

No More Michas.

Built in Israel. For everyone.

To Ronen, Shay, and the entire Navina team: you turned a personal loss into something the world needed. Thank you.

There’s a version of AI in medicine that makes things worse. Tools that promise efficiency and deliver distraction. That document faster while seeing less. What Navina built is the opposite. It works before the appointment begins, so that when the doctor walks in, the twelve minutes she has are entirely about medicine.

Learn more at navina.ai